A bolt of GIS-Manna from Heaven

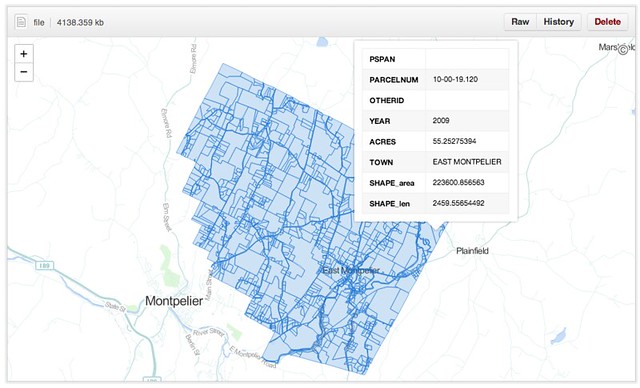

A dedicated and talented GIS developer at the VT Agency of Natural Resources just finished compiling the most comprehensive cadastral dataset to which our small state has ever had access. Coincidentally, he sent out the announcement while I was annoying a few githubbers about their new geodata capabilities. What luck: a test case.

The dataset - as a shapefile - clocks in at 250MB, representing 289,000 parcel polygons in 191 towns. [The 46 VT towns not included tend to have populations under 200 and a blithe commitment to dusty paper records.] It turns out that this is over the 100MB limit imposed on individual files, so I pulled the dataset, split it by town and converted the outputs to geojson, then pushed them to a repository I just set up. The largest of these is juuuuust over the maximum file size to be renderable in Github's snazzy new Mapbox-based interactive maps, but most of the town parcel maps pop up just fine.
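For the curious, the split-by-town step can be sketched in a few lines of Python. This is a minimal sketch, not the exact workflow used for the repository: it assumes the shapefile has already been converted to one big GeoJSON FeatureCollection (for example with `ogr2ogr -f GeoJSON`), and both the `TOWN` property name and the output file naming are hypothetical.

```python
import json
from collections import defaultdict
from pathlib import Path


def split_by_town(collection, town_field="TOWN"):
    """Group the features of a GeoJSON FeatureCollection by a town attribute.

    `town_field` is a hypothetical property name; swap in whatever the
    real attribute table calls it.
    """
    groups = defaultdict(list)
    for feature in collection["features"]:
        # "properties" may legally be null in GeoJSON, so fall back to {}.
        props = feature.get("properties") or {}
        groups[props.get(town_field, "UNKNOWN")].append(feature)
    # Wrap each group in its own FeatureCollection.
    return {
        town: {"type": "FeatureCollection", "features": features}
        for town, features in groups.items()
    }


def write_town_files(per_town, out_dir):
    """Write one <town>.geojson file per town and return the paths written."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    paths = []
    for town, fc in per_town.items():
        # Hypothetical naming scheme: lowercase, spaces to underscores.
        name = str(town).lower().replace(" ", "_") + ".geojson"
        path = out / name
        path.write_text(json.dumps(fc), encoding="utf-8")
        paths.append(path)
    return paths
```

Loading the statewide file with `json.load`, passing it through `split_by_town` and handing the result to `write_town_files` would leave a folder of per-town files ready to commit.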

The Implications

Okay, great - another slippy map somewhere on the web. Who cares? These folks, actually:

- A landowner who wants to look up the parcel numbers of her abutting properties without visiting the steam pipe distribution venue in the basement of her local town hall.

- A town GIS manager who has a hard drive overflowing with parcel shapefiles, converted CAD drawings and map requests from lawyers.

- Probably a lot of lawyers. Don't get me started.

- The overworked data gurus at the Vermont Center for Geographic Information (VCGI), who - while they're probably the only agency that can handle it - are really reluctant to take on management of a statewide, rolling, versioned database.

What Github provides in this test case, for free:

- Fast hosting of modestly-large geospatial data

- Relatively-simple version control of said data

- Edit access to anyone with a github account and a GIS platform (FOSS or Arc'ed)

- An embeddable client view for the public for files up to 10MB

- A robust API for client views bigger than that (for example)

- A muthaflippin' download button (well, "save page as")

For those of us who have been ranting about geoportals in recent months, this pretty much covers the bases. For free. Github is walking the #opendata walk.

What's Next . . .

There are a whole bunch of caveats here. Biggest is the file size issue (though I've seen Bill Dollins and Sophia Parafina starting to work around that in the past few days), since 10MB is a fine limit for municipalities in the second-smallest state, but Manhattan is a teensy bit bigger. Another hitch is data quality; this dataset is top-of-the-line, but like any other it's missing SPAN numbers, dates and acreages here and there. The vendors will tell you that quality can't be beefed up in a free collaborative environment, without data value-add. I don't know if they're right or wrong.
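One way to see how close each town comes to that limit is to measure the serialized size of its GeoJSON. What follows is a rough sketch only: it assumes a hypothetical mapping from town name to FeatureCollection, and it treats the 10MB figure as exactly 10 * 1024 * 1024 bytes, which may not be how Github actually defines its cutoff.

```python
import json

# Assumption: "10MB" read as 10 MiB; the real rendering cutoff may differ.
RENDER_LIMIT_BYTES = 10 * 1024 * 1024


def geojson_size(collection):
    """Size in bytes of the collection serialized compactly as UTF-8."""
    text = json.dumps(collection, separators=(",", ":"))
    return len(text.encode("utf-8"))


def too_big_to_render(per_town, limit=RENDER_LIMIT_BYTES):
    """Return the town names whose GeoJSON exceeds the limit, largest first."""
    sizes = {town: geojson_size(fc) for town, fc in per_town.items()}
    oversized = [town for town, size in sizes.items() if size > limit]
    return sorted(oversized, key=lambda town: sizes[town], reverse=True)
```

Anything this returns is a candidate for splitting further or for one of the workarounds people are experimenting with.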

But I'd love to see a town GIS manager throw a pull request and get this ball rolling. Who's up for it?

The data hub is waiting right here

Update: 7/25/13

I've heard a bunch of awesome questions about the practicalities of using Github this way, so while I am by no means a power user I recorded a quick demo video.

If pigs had wings. . . .

Yours is a vision to quicken the pulse, but what of a host of potential problems:

1. The first, of course: the relative technical competence of multiple "authors." Some, with the best of intentions, could introduce errors that impact others and need to be unwound;

2. Security -- You mean that I can go in and screw around with parcels from East Overshoe? How will they feel about that, and how do we prevent it and make it easy to unwind?

3. Q/A -- there are relevant legal/tax and GIS standards that apply to this data. How do all the potential "editors" know about them, and what is the workflow for validating changes?

4. Oh, I don't want to be a bummer, so I'll quit now. But seriously, this is a dataset with important legal/tax implications, and the town data sets are considered "authoritative" at the local level. I just don't see how an open, communal, integrated dataset can retain that pedigree. It could be very useful for other applications, but not for the purposes for which the towns invested in the original local datasets.

Heh. Paper-mache can work wonders for pig wings :) But to your points:

1.) Github isn't free-for-all crowdsourcing. Any changes must be approved by the repository owner, and that process is very smooth. For parcel data, the repo owner might only accept changes from town/regional GIS managers or designated surveyors. The point here is to make the "write" part easy for those involved, while giving "read" access to an even broader audience.

2.) Nope. And if a trusted source makes an honest mistake it's one click to revert to a previous version.

3.) Github doesn't change this part of the process; parcels are almost always surveyed, drawn and approved by professionals and local officials before they ever make it into a GIS data format.

4.) Keep it coming. There are legitimate weaknesses to this approach, but I'd love to get them in the daylight and figure them out. Thanks for writing!

You could easily run your largest dataset through the topojson command line and shrink it down to less than 2MB. https://github.com/mbostock/topojson/wiki/Command-Line-Reference

Totally - Ben already pointed me in that direction. The reason I avoided it is that (to my knowledge) OGR doesn't natively support TopoJSON, so it becomes inaccessible to the GIS audience when they can't get it into ArcMap or QGIS.

Bill - where did you download the original shapefile? "The dataset - as a shapefile - clocks in at 250MB, representing 289,000 parcel polygons in 191 towns." The Github repository is interesting and the viewer is nice. I am not sure how the GeoJSON files are going to work and how they will be consumed; I am still getting my arms around that part. - Nancy

Hi Nancy - Erik Engstrom at VT ANR compiled the shapefile from maaaaany sources. It may still be available here, but I don't think his intent was to serve it forever: http://anrmaps.vermont.gov/websites/public/CadastralParcels_VTPARCELS.zip

You'll find that QGIS works well with GeoJSON, but it's a bit trickier in Arc, though someone already took a shot at it: http://arcscripts.esri.com/details.asp?dbid=15545

Hi Bill,

Very interesting read. Something that may be of interest to you is the AmigoCloud ArcGIS OGR plugin. This will allow you to ingest GeoJSON files (actually visualise them within ArcMap as a layer) and edit them directly in ArcMap. I haven't personally attempted editing them, but I have been able to view the files with no problems.

https://github.com/RBURHUM/arcgis-ogr

James

Here is a little bit more info on the Amigo plugin from another blogger.

http://blog.geomusings.com/2013/01/22/checking-out-the-gdal-slash-ogr-plugin-for-arcgis/

Quote: "Because the layers are added using a plug-in workspace, they are full read-only citizens inside ArcGIS. For example, I was able to wire up a couple of models using ModelBuilder and execute clips (clipping a GeoJSON layer with a SpatiaLite layer) and buffers and simple tests"

followed by

Quote: "The resultant buffer was written to a file geodatabase"

So this suggests that the script you linked to would work perfectly in combination with this add-on for ArcGIS, allowing Arc users to manipulate data without needing to fire up another application (not that I would be steering people away from Open Source tools or anything like that). I am sure a small tool can be written to rig up that script to a button inside of ArcMap and allow a seamless workflow for general GIS users.

Also, if you have any step-by-step instructions or tutorials on how you set this up via Github, I would be very interested in learning, so that I can test what I have just posted about. Thanks